Kafka to Postgresql

Kafka-to-postgresql is a microservice that consumes Kafka messages and inserts their payloads into a PostgreSQL database. See the Datamodel for how the data is structured.

This microservice requires that the Kafka topic umh.v1.kafka.newTopic exists. From version 0.9.12 onward, the topic is created automatically.

How it works

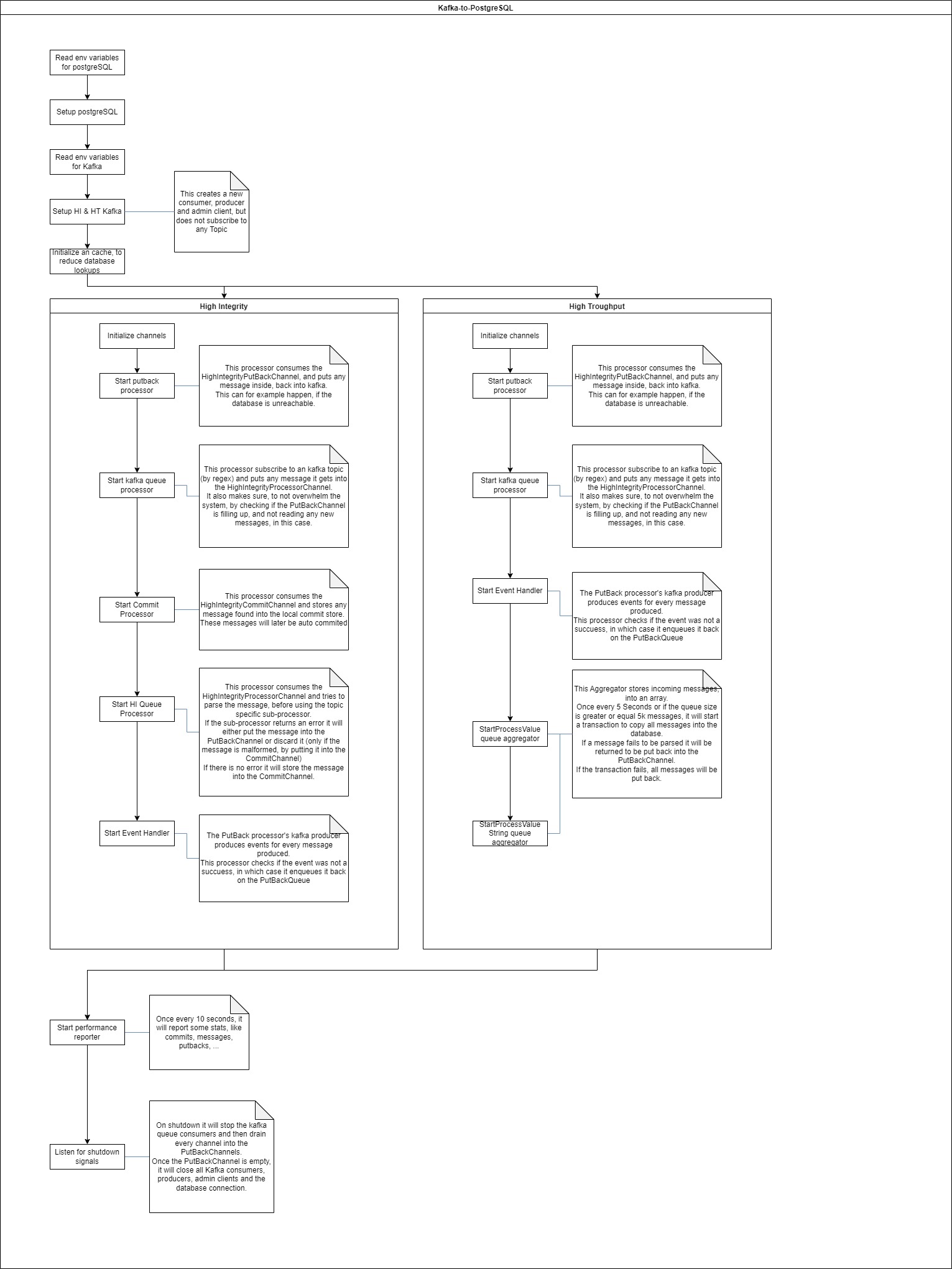

By default, kafka-to-postgresql sets up two Kafka consumers, one for the High Integrity topics and one for the High Throughput topics.

The graphic below shows the program flow of the microservice.

High integrity

The High integrity topics are forwarded to the database synchronously: the microservice waits for the database to confirm a successful insert before committing the message to the Kafka broker. This guarantees that the message is inserted into the database, even though it might take a while.

Most of the topics are forwarded in this mode.
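
The commit-after-insert ordering can be illustrated with a minimal sketch. It is not the microservice's actual implementation: FakeConsumer, FlakyDatabase, forward_high_integrity and max_attempts are hypothetical names, the in-memory classes only stand in for a Kafka consumer and a PostgreSQL connection, and retrying a failed insert is an assumption made for illustration.

```python
class FakeConsumer:
    """Hypothetical stand-in for a Kafka consumer; records committed offsets."""

    def __init__(self, messages):
        self._messages = list(messages)
        self.committed = []

    def poll(self):
        # Return the next (offset, payload) pair, or None when drained.
        return self._messages.pop(0) if self._messages else None

    def commit(self, offset):
        self.committed.append(offset)


class FlakyDatabase:
    """Hypothetical stand-in for PostgreSQL; fails the first `failures` inserts."""

    def __init__(self, failures=0):
        self._failures = failures
        self.rows = []

    def insert(self, payload):
        if self._failures > 0:
            self._failures -= 1
            raise ConnectionError("database unavailable")
        self.rows.append(payload)


def forward_high_integrity(consumer, database, max_attempts=5):
    """Insert each message synchronously; commit only after the insert succeeds."""
    while (message := consumer.poll()) is not None:
        offset, payload = message
        for _ in range(max_attempts):
            try:
                database.insert(payload)
            except ConnectionError:
                continue  # assumed retry policy: try the same message again
            consumer.commit(offset)  # reached only after a successful insert
            break
        else:
            # All attempts failed: stop without committing this message.
            return False
    return True
```

With two queued messages and a database that rejects the first two inserts, the function still ends with both rows stored and both offsets committed. If every attempt fails, it returns False and the offset stays uncommitted, which is what allows the message to be processed again later.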

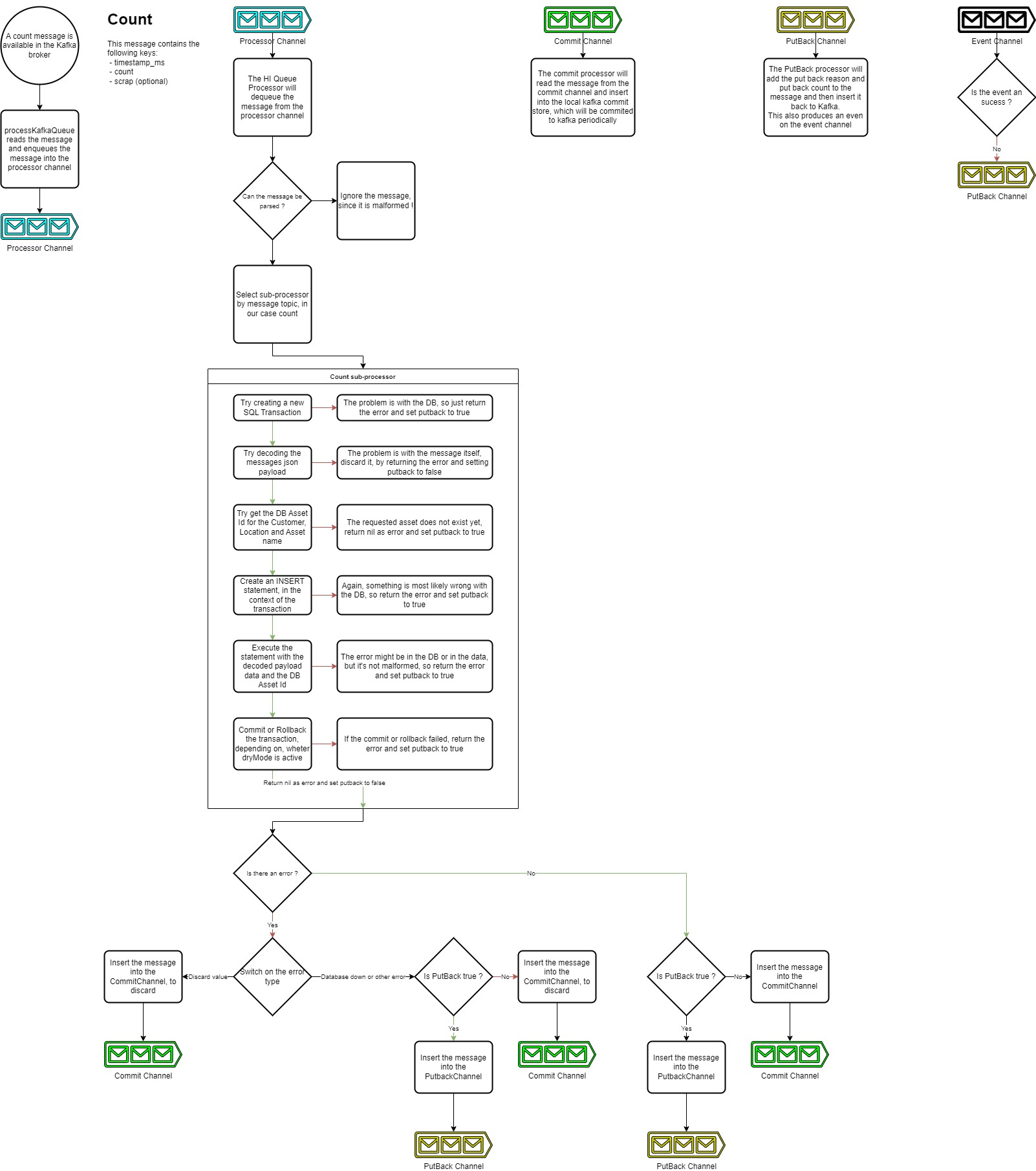

The picture below shows the program flow of the high integrity mode.

High throughput

The High throughput topics are forwarded to the database asynchronously: the microservice commits the message to the Kafka broker without waiting for the database to confirm a successful insert. The message is therefore not guaranteed to be inserted into the database, but the microservice tries to insert it as soon as possible. This mode is used for topics that are expected to have a high throughput.

The topics that are forwarded in this mode are processValue, processValueString and all the raw topics.
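
The trade-off of the asynchronous mode can be sketched in the same spirit. This is an illustration under assumptions, not the microservice's code: forward_high_throughput, insert and commit are hypothetical names, and a single background thread fed by a queue is just one possible way to decouple the commit from the database write.

```python
import queue
import threading

_DONE = object()  # sentinel that tells the background writer to stop


def forward_high_throughput(messages, insert, commit):
    """Commit each message right away and insert it in the background.

    `messages` is an iterable of (offset, payload) pairs; `insert` and
    `commit` are hypothetical callables standing in for the database
    write and the Kafka commit.
    """
    pending = queue.Queue()

    def writer():
        while (payload := pending.get()) is not _DONE:
            try:
                insert(payload)
            except ConnectionError:
                # The message was already committed, so it is not retried
                # here and is lost: the price of not waiting.
                pass

    worker = threading.Thread(target=writer)
    worker.start()
    for offset, payload in messages:
        pending.put(payload)  # hand the payload to the background writer
        commit(offset)        # commit without waiting for the database
    pending.put(_DONE)
    worker.join()
```

In this sketch every offset is committed regardless of whether its insert later succeeds, so a payload whose insert raises an error is dropped while the remaining payloads are still written.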

What’s next

- Read the Kafka to Postgresql reference documentation to learn more about the technical details of the Kafka to Postgresql microservice.